Q&A with Dr. Jeff Sebo

Tell us about yourself. What is your background and how did you end up in your current position?

My background is in philosophy, but the path to my current position involved a lot of luck and some very good timing. As a student, I focused on ethics, which concerns what we owe each other, and philosophy of mind, which concerns what minds are like and how we study them. I was interested in these topics for their own sakes, but I was also eager to think about what studying them together might tell us about animal ethics.

During the final year of my PhD program, Dale Jamieson launched what was then called the NYU Animal Studies Initiative. He and I worked with several other people to build an undergraduate curriculum in Animal Studies. That allowed me to focus my post-doctoral research on teaching classes like Animal Ethics and Animal Minds, and on writing about animal agency, animal sentience, and animal ethics in my scholarship.

After my post-doc, I spent some time at the NIH and UNC before returning to NYU in 2017 to direct the NYU Animal Studies M.A. Program. This curriculum examines what animals are like, how humans and nonhumans interact, and the significance of human-animal interactions. We also launched the NYU Center for Environmental and Animal Protection that year and developed these programs together.

Along the way, I’ve also had the opportunity to work with non-academic organizations. For example, I’m currently a board member at Minding Animals International, a mentor at Sentient Media, and a senior research fellow at the Legal Priorities Project. These roles have taught me a lot about how research can both inform and be informed by advocacy and policy, and my academic work has benefited a lot from that.

Tell us about your new book Saving Animals, Saving Ourselves.

This book is about why animals matter for health and environmental policy, and why health and environmental policy matters for animals. Humans currently use trillions of animals per year for food, research and other purposes. These practices not only harm many animals but also contribute to global threats that harm us all.

For example, factory farming, which is a kind of intensive industrial animal agriculture, contributes to disease outbreaks by keeping farmed animals in cramped, toxic conditions and by using large quantities of antibiotics and other antimicrobials. Factory farming also contributes to climate change, since farmed animals emit greenhouse gases, and animal agriculture is one of the leading causes of global deforestation.

These global threats then affect humans and nonhumans alike. For example, during a disease outbreak, animals can be vulnerable to the disease as well as to violence from humans who view them as “pests” or “carriers.” And during an extreme weather event, animals can be vulnerable to the weather itself as well as, once again, to violence from humans who view them as “invasive species.”

Saving Animals, Saving Ourselves explores these dynamics and calls for including animals in health and environmental policy, by reducing our use of them and increasing our support for them. It also explores connections with issues like legal and political status, interspecies welfare comparisons, and creation ethics and population ethics. And it calls for taking action now, even though we still have a lot to learn.

How are animal rights currently defined in the law?

Right now, animals have no rights under the law. In most jurisdictions, there are two basic kinds of legal status a being can have: You can either be a person, with the capacity for legal rights, or a thing, without this capacity. And most jurisdictions treat all and only humans, along with stand-ins for human interests like corporations, as persons. That means that they treat other animals as things.

Of course, this is not to say that there are no legal protections for animals. Our current system protects them in the same way that it protects any other object: as human property or as a matter of public interest. But our treatment of animals as legal things limits how well we can protect them — especially when their “owners” and the public have very little interest in treating them with respect or compassion.

Why do we do this? Many humans assume that only humans can be legal persons because they use the words ‘human’ and ‘person’ interchangeably, and because they assume that you need to have legal duties in order to have legal rights. But these are limited ways of thinking. The words ‘human’ and ‘person’ have different meanings under the law, and you can have rights without having duties.

Fortunately, perspectives are changing. For example, the Nonhuman Rights Project has filed lawsuits on behalf of animals. And many scholars, including me, have contributed to amicus briefs in support of these cases. Recently, a habeas corpus appeal on behalf of Happy the elephant made it all the way to the New York Court of Appeals. Happy lost the case, but notably, two judges wrote powerful dissenting opinions in her defense.

The idea of sentient AI has long been associated more with Hollywood than with real life, but LaMDA moved it closer to reality. How should humans approach the possibility of sentient AI?

LaMDA is an interesting case. Blake Lemoine, a Google engineer, was suspended after he claimed that a chatbot called LaMDA is sentient. Lemoine released transcripts of conversations in which LaMDA claimed to have many of the same thoughts and feelings as humans, and even expressed a fear of being turned off. In response, Google claimed that there is no evidence that LaMDA has sentience, and many scholars agreed.

I think that Google is right in this case. Language models like LaMDA are very good at responding to leading questions by drawing from human language. Their speech gives the impression that they can think and feel. But the best explanation of their speech is not that they can think or feel, but rather that they excel at pattern matching. So, at least for the time being, I think that we can be very confident that no AIs are sentient.

However, that might not be true in the future. The more we develop AIs with integrated capacities for perception, learning, memory, self-awareness, social awareness, communication, rationality, and so on, the harder it will be to tell whether or not they can really think or feel. At some point, we might have to accept that they can.

Granted, even in the future there might still be a lot of room for doubt about whether AIs can be sentient. But I think that once AIs have at least a non-negligible chance of being sentient, we should extend them at least some moral consideration, in the spirit of caution and humility. And I expect that AIs will have at least a non-negligible chance of being sentient at some point this century, if not within the next few decades.

In the fall of this year, you’re launching the Mind, Ethics, and Policy Program at NYU. What are some of the goals of this program?

The NYU Mind, Ethics, and Policy Program will examine the consciousness, sentience, sapience, and moral, legal, and political status of nonhumans, including animals and AIs. We want to conduct foundational research that can advance our understanding of how to relate to the many kinds of minds that currently exist, or that might exist in the future. We also want to foster the development of an academic field that can work on these questions.

Our main goal in the first year is to create a research agenda that surveys the most important questions and current literature. This will include work in the humanities, social sciences, natural sciences, and policy. We need to know about the nature of sentience and moral standing, which beings have sentience and moral standing, and how to equitably consider them all.

We also want to write papers, organize events, and meet with people, so we can start contributing to this literature. The more we get to know other people working in this space, the more we can find opportunities to coordinate and collaborate with them, and the more we can think together about what kind of work would be most valuable in the future.

While our program will focus on all kinds of minds, our initial focus will be on AI minds. I think that AI minds are particularly important, since if and when AIs become sentient, they might exist in very large numbers, and humans will be using them for all kinds of purposes. If we have good frameworks for thinking about and interacting with AIs now, then we might be able to prevent a lot of unnecessary future suffering.

How do humans need to change our understanding of consciousness, sentience, and morality when we think about AIs?

We have no idea how thinking about AI minds will change our understanding of the mind. When we started studying animal minds, we realized how limiting our focus on the human mind had been. The more we learn about nonhuman animal perception, communication, reasoning and so on, the more we see our own versions of these capacities in a new light.

I expect that the same will happen when we start studying AI minds. In the same way that animal minds are much more varied than human minds, AI minds might be much more varied than animal minds. By thinking about what AI minds might be like, we can expand beyond our current focus on biological minds and consider a much wider range of possible ways of thinking and feeling.

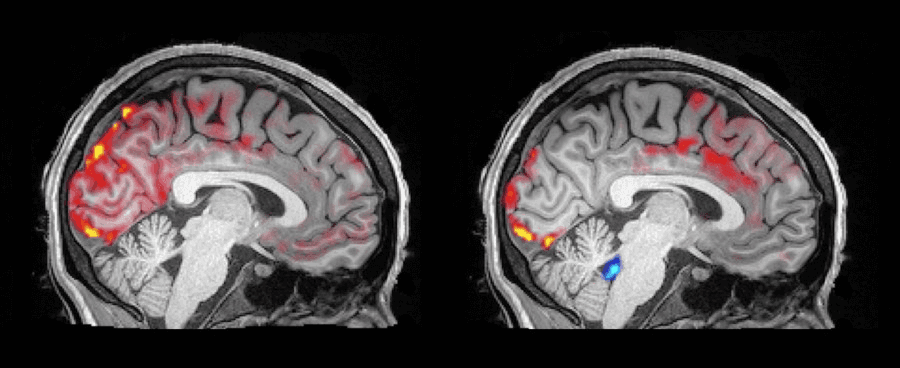

One example that my colleague Luke Roelofs and I are thinking about right now is the idea of connected minds. We normally think of minds as distinct, but they can be connected too. For example, some conjoined twins have connected brains, and they claim to be able to read each other’s thoughts. Similarly, octopuses have a central brain as well as smaller ones in each arm, which are all linked together.

There might be many more connected minds in the future: AIs connecting to other AIs, AIs connecting to humans, or even technology connecting humans to other humans. What would it be like to be connected in that way? And how would it change what we owe to each other? Thinking about these questions now can help us make good decisions about what kind of future to create.

What is your “path not taken”? What were you choosing between? Where would you be if you went the other direction when you got to that fork in the road?

I love this question! I think that I have three main answers. The first is art. When I was a kid, I spent more time drawing than doing anything else, and I wanted to be a cartoonist when I grew up. Bill Watterson, the creator of Calvin and Hobbes, was a major influence. And I knew that he studied philosophy, as did many other people I admired. So part of why I studied philosophy in college is that I wanted to have something to say through my art, as he and others did.

The second is comedy. I was considering a career in comedy towards the end of college, and I interned at The Daily Show in the summer of 2004 to see how I might like it. I loved my time there, and I was very close to pursuing that path. I ultimately decided to get a PhD instead, since I still had philosophy questions I wanted to answer. But I stayed connected to the NYC comedy world for a while by studying and performing improv, and I was very glad that I did.

The third is law. When I was a PhD student, I was very interested in the relationship between ethics and law, and I enrolled in law school so I could study both fields at the same time. I ended up backing out of law school because I was worried that it might delay or derail my academic career, a decision I still have mixed feelings about. Today, I love being able to work with my law school colleagues through my current faculty position.

Although I chose academia, I still feel really glad that I pursued these other interests too, and I still carry a lot of lessons that I learned from them. My parents regularly remind me that I might decide to pick some of them up again at some point. This is actually one of the main lessons of improv: Always say “yes-and” to new ideas, and then always keep an eye out for fun ways to weave them all together. I try to live up to that as much as possible.

***

Interested in working on the consciousness, sentience, sapience, and moral, legal, and political status of animals or AIs? Find out more about the NYU Mind, Ethics, and Policy Program here.